Not trying to cast stones, just sharing this for the broader community: the official stance now is no more 2.x releases. Not even backports, fixes, or improvements to ease migrations. So it makes no sense, @dupontbertrand, for you to try to get performance readings on 2.x. Drop it.

Phew, quite a Saturday. Here’s what happened today on the benchmark front.

Dedicated server

We now have a Hetzner CPX42 (8 vCPU AMD EPYC, 16 GB RAM, Nuremberg) running nothing but benchmarks. No desktop, no GUI, no cron jobs, no shared runners. Just Meteor, MongoDB, and Artillery.

MongoDB 7.0 and Node 22 — same versions as Meteor’s dev bundle.

Server tuning

Getting reliable numbers took a few iterations. Here’s what we ended up with:

- CPU pinning: Meteor gets cores 0-3, Artillery/Chromium gets cores 4-7 via `taskset`. They don’t fight for CPU anymore.

- MongoDB restart between each scenario: clears the WiredTiger cache and open cursors so each scenario starts fresh.

- Filesystem cache drop between scenarios: `echo 3 > /proc/sys/vm/drop_caches`

- 5-second cooldown between scenarios for the OS to settle

- Parasitic services disabled: apt-daily, fstrim, motd, all turned off
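For anyone who wants to reproduce the isolation steps above, here is a rough Python sketch of how they could be scripted. It is not the actual meteor-bench-agent code. The function names, the `mongod` systemd unit name, the `sync` call before the cache drop, and the dry-run flag are all assumptions made for illustration. Only `taskset`, the core ranges, the `drop_caches` write, and the 5-second cooldown come from the post.

```python
import subprocess
import time

METEOR_CORES = "0-3"    # cores reserved for the Meteor server (from the post)
LOADGEN_CORES = "4-7"   # cores reserved for Artillery/Chromium (from the post)
COOLDOWN_SECONDS = 5    # pause between scenarios (from the post)


def pinned(cores: str, argv: list[str]) -> list[str]:
    """Prefix a command with taskset so it only runs on the given cores."""
    return ["taskset", "-c", cores, *argv]


def reset_commands(mongo_unit: str = "mongod") -> list[list[str]]:
    """Commands to run between scenarios (they need root).

    The unit name "mongod" is an assumption; adjust to your install.
    """
    return [
        # Restarting MongoDB clears the WiredTiger cache and open cursors.
        ["systemctl", "restart", mongo_unit],
        # Assumption: flush dirty pages first so the cache drop is effective.
        ["sync"],
        # Drop page cache, dentries and inodes.
        ["sh", "-c", "echo 3 > /proc/sys/vm/drop_caches"],
    ]


def reset_between_scenarios(dry_run: bool = True) -> None:
    """Run (or just print) the reset commands, then let the OS settle."""
    for argv in reset_commands():
        if dry_run:
            print(" ".join(argv))
        else:
            subprocess.run(argv, check=True)
    if not dry_run:
        time.sleep(COOLDOWN_SECONDS)
```

With this, the server would be started with something like `pinned(METEOR_CORES, ["node", "main.js"])` and the load generator with `pinned(LOADGEN_CORES, [...])`, where the exact commands depend on how the app is built and launched.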

This made a huge difference. Our early runs had GC max pauses of 5+ seconds that turned out to be just noise from lack of isolation. With the setup above, the same metric dropped to ~40ms. Lesson learned: benchmark methodology matters more than the numbers themselves.

Two repos

We split things into two private repos:

- bench-dashboard — the Blaze dashboard app, deployed automatically to Galaxy via push-to-deploy

- meteor-bench-agent — the benchmark CLI, scenarios, collectors, and the polling agent that runs on the server

The old performance repo is archived.

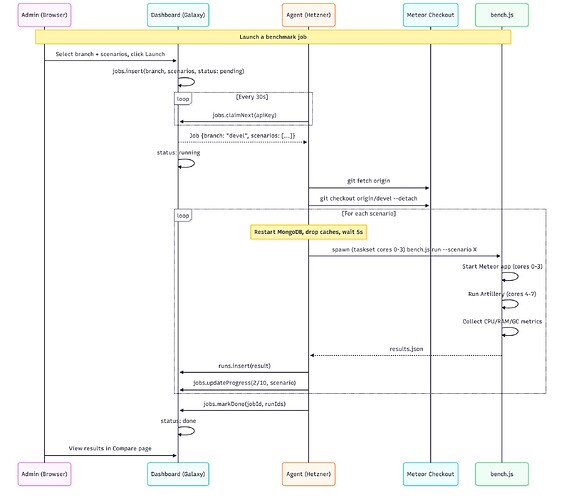

How it all connects

The agent polls every 30s, picks up pending jobs, runs them sequentially, and pushes results back. There’s an admin page where you can select a branch from a dropdown (synced from the Meteor repo — all 1,900+ branches), pick scenarios, and hit launch.
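The poll / run / push cycle described above can be sketched in a few lines of Python. This is only an illustration of the pattern, not the real agent: `fetch_pending`, `run_job`, and `push_result` are hypothetical callables standing in for whatever the agent uses to talk to the dashboard and launch scenarios. Only the 30-second interval and the sequential execution come from the post.

```python
import time
from typing import Callable, Iterable, Optional

POLL_INTERVAL_SECONDS = 30  # from the post


def run_pending(
    fetch_pending: Callable[[], Iterable[dict]],
    run_job: Callable[[dict], dict],
    push_result: Callable[[dict, dict], None],
) -> int:
    """Run every pending job one at a time and return how many were run."""
    count = 0
    for job in fetch_pending():
        result = run_job(job)      # blocks until the scenario finishes
        push_result(job, result)   # report back before starting the next job
        count += 1
    return count


def agent_loop(
    fetch_pending: Callable[[], Iterable[dict]],
    run_job: Callable[[dict], dict],
    push_result: Callable[[dict, dict], None],
    max_polls: Optional[int] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> None:
    """Poll forever (or max_polls times), sleeping between polls."""
    polls = 0
    while max_polls is None or polls < max_polls:
        run_pending(fetch_pending, run_job, push_result)
        polls += 1
        sleep(POLL_INTERVAL_SECONDS)
```

Running jobs strictly one after another matters here: two scenarios running at the same time would compete for the same pinned cores and skew both results.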

Dashboard updates

- Compare page now shows all scenarios side by side with the run date in the header, so you can see if you’re comparing runs from the same day

- Scenario names are clickable in the compare view

- Tooltips on each metric explaining what it measures

- Copy JSON button for easy data export

- About page rewritten with full methodology — CPU pinning, isolation steps, server specs

- Agent status visible in the admin panel (Online/Idle/Offline)

- Job progress in real-time (3/10 reactive-light, etc.)

First real comparison: devel vs release-3.5

All 10 scenarios, 240 concurrent Chromium sessions on the heavy ones. Same machine, same day, full isolation between scenarios.

The headline: release-3.5 has dramatically better GC.

| Scenario | Metric | devel | release-3.5 | Delta |
|---|---|---|---|---|
| reactive-crud (240 browsers) | GC total pause | 4,813 ms | 756 ms | -84% |
| reactive-crud | GC count | 970 | 299 | -69% |
| reactive-crud | Wall clock | 927s | 687s | -26% |
| non-reactive-crud (240 browsers) | GC total pause | 26,929 ms | 5,914 ms | -78% |
| non-reactive-crud | Wall clock | 373s | 267s | -28% |
| ddp-reactive-light (150 DDP) | GC count | 653 | 107 | -84% |
| ddp-reactive-light | RAM avg | 371 MB | 489 MB | +32% |

release-3.5 runs roughly 3-6x fewer garbage collections in the rows above (970 vs 299 on reactive-crud, 653 vs 107 on ddp-reactive-light) and spends 67-84% less time paused in GC. The trade-off is ~30% more RAM usage: it seems to keep objects alive longer instead of constantly allocating and collecting. For users, this should mean fewer micro-freezes and more consistent response times.
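The Delta column can be checked from the raw numbers. Here is a small Python sketch with values copied from the table above. The function names are illustrative and not taken from the dashboard code.

```python
def percent_delta(baseline: float, candidate: float) -> float:
    """Relative change of candidate vs baseline, in percent."""
    return (candidate - baseline) / baseline * 100.0


def reduction_factor(baseline: float, candidate: float) -> float:
    """How many times smaller the candidate value is than the baseline."""
    return baseline / candidate


# (label, devel, release-3.5), copied from the comparison table
rows = [
    ("reactive-crud GC total pause (ms)", 4813, 756),
    ("reactive-crud GC count", 970, 299),
    ("reactive-crud wall clock (s)", 927, 687),
    ("non-reactive-crud GC total pause (ms)", 26929, 5914),
    ("non-reactive-crud wall clock (s)", 373, 267),
    ("ddp-reactive-light GC count", 653, 107),
    ("ddp-reactive-light RAM avg (MB)", 371, 489),
]

for label, devel, release in rows:
    print(f"{label}: {percent_delta(devel, release):+.0f}%")
```

`reduction_factor(970, 299)` gives about 3.2 and `reduction_factor(653, 107)` about 6.1, which is where the "x fewer garbage collections" figures come from.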

The exception — cold start:

| Metric | devel | release-3.5 |
|---|---|---|
| cold-start | 16s | 36s |
| server bundle | 91 MB | 207 MB |

devel cold-starts in half the time. The server bundle is also half the size. Worth investigating what changed there.

What’s next

- Environment flags — compare sockjs vs uws transport, DISABLE_SOCKJS, etc.

- MongoDB-focused scenarios — read-heavy (100K docs), bulk writes, aggregation pipelines

- Split cold-start into build time vs boot time

The dashboard is public and read-only: https://meteor-benchmark-dashboard.sandbox.galaxycloud.app

Go to Compare, select devel vs release-3.5, and browse all 10 scenarios. The About page has the full methodology if you’re curious about how things are measured.

Would love feedback on what other comparisons would be useful!

This is really fantastic!

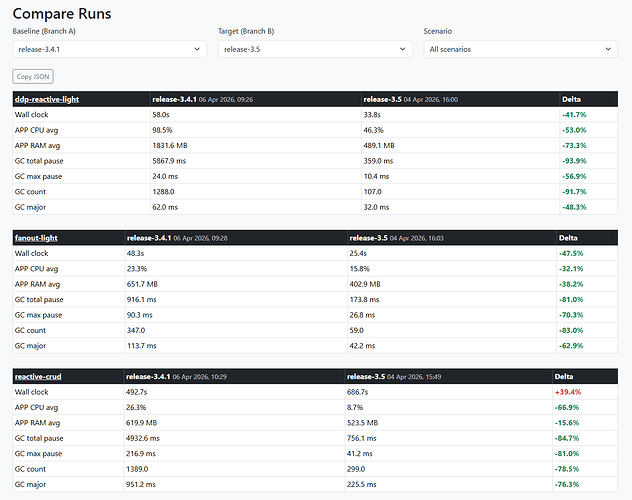

3.4 is the current production version, so 3.5 should not introduce a performance regression.

Can we compare 3.4 vs 3.5?

Yes, huge diff between these two! But I made some changes on the server between the 2 runs, so I’m re-running the 3.5 scenarios to be sure.
