Apologies in advance for the poorly filtered brain dump… From what I’ve seen and heard (and experienced a little bit, though I prefer coding most things by hand), a lot of the projects have fairly simple, mundane architectures, so it’s trivial for LLMs to fill in the gaps once you’ve given them the right structure and instructions. Like if every route in the project has 1 server endpoint, 1 screen, and barely anything shared with the rest of the project, really that swarm of LLMs is just one-shotting a bunch of mini-MVPs.

But a side-effect is that you can get a lot of “Write Everything Twice” style code, in that every feature’s sort of addressed in isolation and inlined where needed unless there’s a really common abstraction pattern that’s been picked up in the training process.

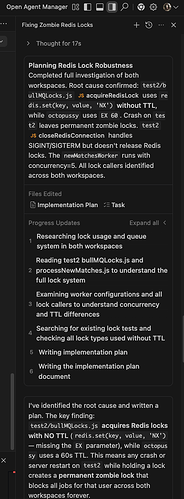

If you don’t outpace your ability to refactor as a human you might be able to rein it in, but I’ve only seen that with e.g. a concurrency of 2~3, not 100s.

Otherwise you can have things slow down once the LLMs start to contradict themselves with each subsequent query, or overcompensate and refactor something they shouldn’t have and start spiralling. Which of course makes creating a cohesive project quite messy.

And to be honest I would say a lot of code generated is really just modern JS/TS dev boilerplate (which is what LLMs are pretty good at). Like there was a highly productive era when no one cared about static typing and dumped data in MongoDB, shared a single layout for the whole app, and could easily create page after page of an MVP overnight, without extra tooling being needed. Obviously not without tradeoffs. But that’s the era Meteor was originally designed for. And even libraries like simpl(e)-schema could be used to generate forms in Blaze so… you could get an ad-hoc DB schema, form generator, and request validator all in one… Now a lot of LLMs will generate those 3 separately (as they probably should, I guess).
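To make the "all in one" idea a bit more concrete, here's a rough sketch of a single schema object driving both a validator and a form generator. To be clear, this is not the simpl-schema API: `FieldSpec`, `postSchema`, `validate`, and `toFormFields` are all names I've made up for illustration, and a real library handles far more cases (nested objects, arrays, custom rules).

```typescript
// Hypothetical field description; NOT the real simpl-schema API.
type FieldType = "string" | "number" | "boolean";

interface FieldSpec {
  type: FieldType;
  label: string;
  optional?: boolean;
}

type Schema = Record<string, FieldSpec>;

// One definition, reused for every purpose below.
const postSchema: Schema = {
  title: { type: "string", label: "Title" },
  views: { type: "number", label: "View count", optional: true },
};

// Use 1 and 2: ad-hoc DB schema and request validator.
// Run this before an insert or inside a request handler.
// Returns a list of human-readable errors (empty means valid).
function validate(schema: Schema, doc: Record<string, unknown>): string[] {
  const errors: string[] = [];
  for (const [key, spec] of Object.entries(schema)) {
    const value = doc[key];
    if (value === undefined || value === null) {
      if (!spec.optional) errors.push(`${spec.label} is required`);
      continue;
    }
    if (typeof value !== spec.type) {
      errors.push(`${spec.label} must be a ${spec.type}`);
    }
  }
  // Reject keys the schema does not know about.
  for (const key of Object.keys(doc)) {
    if (!(key in schema)) errors.push(`${key} is not allowed`);
  }
  return errors;
}

// Use 3: form generator. Derives input descriptors from the same schema;
// a template layer would render these as actual inputs.
interface FormField {
  name: string;
  label: string;
  inputType: "text" | "number" | "checkbox";
  required: boolean;
}

const INPUT_TYPES: Record<FieldType, FormField["inputType"]> = {
  string: "text",
  number: "number",
  boolean: "checkbox",
};

function toFormFields(schema: Schema): FormField[] {
  return Object.entries(schema).map(([name, spec]) => ({
    name,
    label: spec.label,
    inputType: INPUT_TYPES[spec.type],
    required: !spec.optional,
  }));
}
```

So `validate(postSchema, { views: "3" })` would complain about both the missing title and the wrong type, while `toFormFields(postSchema)` gives you a text input and a number input without a second definition to keep in sync. An LLM generating the three pieces separately has to keep them consistent by hand (or by prompt).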

The other thing is they seem to work better with libraries with a fairly uneventful development history, or a bulk of data from a recent era. Otherwise you can wind up with the whole dance of “whoops, you’re absolutely right, Meteor did have a major release in 2024 which changed the entire API from synchronous to asynchronous - that’s not just you going crazy but your hard earned intuition guiding you to spot what others have trouble realising. It’s my fault for somehow missing this. Let me rethink this plan” yada yada yada.

Most recently for me this was re Beanie ODM (think Pythonic Mongoose) - specifically Motor (the old community-led async driver for MongoDB in Python) and PyMongo (the official? sync driver), because the two technically merged under one sync/async banner and I think the MongoDB team is working on it now anyway (along with the Django MongoDB driver). But a lot of old references remain online to Beanie back when it needed Motor, which I think was pre-mid 2024? (Just like Meteor v3…)

That said I’ve still seen them cause render loops with React so… sometimes the underlying library is just a pain in the rear or the workflow you’re trying to encode is too poorly defined. I guess that’s where doing a bit of manual coding or being careful with an AGENTS.md or something helps but…
