@diaconutheodor thank you for the great work. Just one question: Is this backwards compatible on the client side? We have a Cordova application, and some clients may still be running the older version after we add the package. Will they still get live data, or do they have to load an update first?

@XTA everything is fully BC. That was the founding idea, that you don’t have to change anything in your code, client-side or server-side, unless you need custom reactivity and use some of the enhancements it provides. You just need to add this package, add “disable-oplog”, boot a redis-server and you’re good to go.

Again, redis-oplog is for large scale. If you have 10-50 online users with medium activity, you will not perceive any performance gains…

This month I’m labeling it prod-ready. We have already begun moving a few of our bigger apps to redis-oplog; we uncovered some very nice “edge cases” and fixed them in 1.1.8. More fixes will follow in 1.1.9 (which is going to be released next week).

Yeah, in our case we have about 90k chat messages per day and also delete the same amount. The oplog changes are killing some of our instances when we fire the cronjob, so I hope we can fix the issue with your package and pushToRedis:false

Are you using Meteor or Apollo? If Meteor, how many concurrent DDP (Websocket) connections are you keeping active at once? If a lot, then how are you scaling, with hardware alone?

We’re using Meteor (DDP), because Apollo doesn’t seem ready to replace the current stack with all the features we need. At peak time we only have about 160 concurrent user connections, so it’s not a lot (inserting approx. 80 messages per minute according to the Kadira logs). We’re running on a single VPS (costs about 50€ per month).

But we also have a bigger application with about 1.5k concurrent user connections (it’s a YT converter). There, too, we only need one VPS for Meteor (and a separate one for the database). We’re using a lot of Meteor methods there and also the Pub/Sub system in some parts (like current listeners for a certain playlist).

@diaconutheodor

Thanks a bunch for this awesome package yet again!!

One thing: If I understand the docs on github correctly, there are no callbacks anymore for all the Collection methods?!

Thing is: In some cases I do need callbacks, like for example setting a state in my React app after a successful remove() or update() and stuff like that.

Is there any way around this so I can use your package but also still have callbacks in these cases where I actually need them?

Uff, good that you mention this. I didn’t see that in the docs. This may break some of our code, too. Is there any reason for it? Why not just check whether the second argument is an object or a function, or whether the third one is a function?
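For illustration, the kind of argument check suggested above could look roughly like this in plain JavaScript. This is only a sketch: `normalizeMutationArgs` is a made-up name, and nothing here is taken from redis-oplog's actual code.

```javascript
// Hypothetical helper: sort out the optional trailing arguments of an
// update(selector, modifier, [options], [callback]) style call.
function normalizeMutationArgs(optionsOrCallback, maybeCallback) {
  let options = {};
  let callback;

  if (typeof optionsOrCallback === 'function') {
    // Called as update(selector, modifier, callback)
    callback = optionsOrCallback;
  } else {
    if (optionsOrCallback !== null && typeof optionsOrCallback === 'object') {
      // Called as update(selector, modifier, options[, callback])
      options = optionsOrCallback;
    }
    if (typeof maybeCallback === 'function') {
      callback = maybeCallback;
    }
  }

  return { options, callback };
}

// Example calls covering the three shapes.
const done = () => {};
const onlyCallback = normalizeMutationArgs(done);
const optionsAndCallback = normalizeMutationArgs({ pushToRedis: false }, done);
const nothingPassed = normalizeMutationArgs();
```

Whether such a check would be enough inside the package is a separate question; the sketch only shows that the arguments themselves can be told apart.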

@klabauter @XTA redis-oplog only affects server-side stuff. So nothing is affected client-side.

Callbacks also work server-side. The only limitation is that you cannot fine-tune the reactivity and use a callback at the same time, e.g. {pushToRedis: false} together with a callback (long story why it is like this).

Proof: https://github.com/cult-of-coders/redis-oplog/blob/master/testing/mutation_callbacks.js

If you want an “async” event, just run a Meteor.defer()
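A rough sketch of that pattern, written so it also runs outside Meteor: `removeWithoutRedis`, `fakeCollection` and `fakeDefer` are made-up names, and the fake collection only imitates a synchronous server-side `remove(selector, options)`.

```javascript
// Sketch only: `collection` is any object with a synchronous, Meteor-style
// remove(selector, options); `defer` stands in for Meteor.defer.
function removeWithoutRedis(collection, selector, defer, afterRemove) {
  // No callback is passed, so the fine-tuning option can be used.
  const removedCount = collection.remove(selector, { pushToRedis: false });

  // Follow-up work is scheduled separately instead of via a callback.
  defer(() => afterRemove(removedCount));

  return removedCount;
}

// Stand-ins so the sketch runs without Meteor.
const fakeCollection = {
  docs: [{ _id: 'a', old: true }, { _id: 'b', old: false }],
  lastOptions: null,
  remove(selector, options) {
    this.lastOptions = options;
    const before = this.docs.length;
    this.docs = this.docs.filter((doc) => doc.old !== selector.old);
    return before - this.docs.length;
  },
};
const deferredJobs = [];
const fakeDefer = (fn) => deferredJobs.push(fn);

let reportedCount = null;
const removed = removeWithoutRedis(
  fakeCollection,
  { old: true },
  fakeDefer,
  (count) => { reportedCount = count; }
);
```

In a real app you would presumably pass your own collection and `(fn) => Meteor.defer(fn)` instead of the stand-ins.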

Ah okay, so I only have to modify the code where I use some options like pushToRedis. This is only the case within the cronjob, so that shouldn’t be a problem

Thank you for your explanation! Will use redis-oplog soon then!

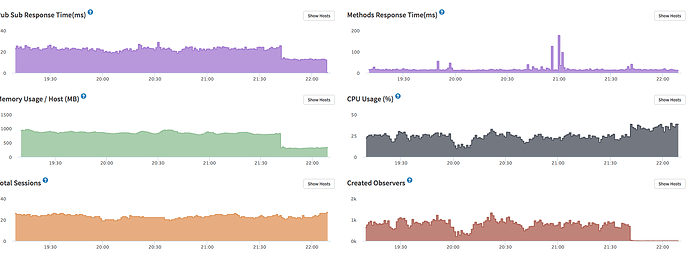

We’ve now moved our app over to Redis Oplog. For the moment everything seems to work perfectly. On Kadira we can see that the creation of observers went down to 3 per minute (before we had >800). CPU usage and method response time are also fine.

// Edit: Just noticed that the CPU usage has increased by 10-15% (before we always had max. 25%, now we are at 35-40% according to Kadira).

Just a pondering, nothing too serious, but… How much work would it be to move this from Redis to OrbitDB, which uses IPFS? Just thinking that it would be pretty amazing to have the same functionality but completely independent of a central server.

@diaconutheodor We’re getting some errors in Kadira within this function:

    foreignSub = Meteor.subscribe("chatMessages", chatId, function () {
      var Chat = Chats.findOne(chatId);
      if (Chat) {
        if (Chat.user2 == Meteor.userId()) {
          self.foreignUser(Meteor.users.findOne(Chat.user1)._id);
        } else {
          self.foreignUser(Meteor.users.findOne(Chat.user2)._id);
        }
      }
    });

On some users this gives an

Uncaught TypeError: Cannot read property '_id' of undefined

We have been getting this error since upgrading. I guess the subscription is marked as ready when it isn’t ready yet?

@copleykj the amount of work is minimal if it has a pub/sub system that allows sending messages to channels and listening to some channels.
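To make that requirement concrete, here is a tiny in-memory stand-in with just those two operations. It is only a sketch: the class name, channel names and message shape are invented for illustration and are not redis-oplog's actual interface or wire format.

```javascript
// Minimal in-memory stand-in for the two operations mentioned above:
// listening to a channel and sending a message to a channel.
class InMemoryPubSub {
  constructor() {
    this.listeners = new Map(); // channel name -> Set of handlers
  }

  // Register a handler for a channel; returns a function that unsubscribes.
  subscribe(channel, handler) {
    if (!this.listeners.has(channel)) {
      this.listeners.set(channel, new Set());
    }
    this.listeners.get(channel).add(handler);
    return () => this.listeners.get(channel).delete(handler);
  }

  // Deliver a message to every handler on the channel; returns how many got it.
  publish(channel, message) {
    const handlers = this.listeners.get(channel);
    if (!handlers) return 0;
    handlers.forEach((handler) => handler(message));
    return handlers.size;
  }
}

const bus = new InMemoryPubSub();
const received = [];
const stop = bus.subscribe('chatMessages', (msg) => received.push(msg));

bus.publish('chatMessages', { event: 'insert', docId: 'abc' }); // delivered
bus.publish('otherChannel', { event: 'insert', docId: 'zzz' }); // nobody listening
stop();
bus.publish('chatMessages', { event: 'remove', docId: 'abc' }); // after unsubscribe
```

Any backend that can offer an equivalent of `subscribe` and `publish` across processes would, in principle, fit the description above.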

@XTA

Processor increase is normal and it was expected due to the optimistic-ui, however I also see an increase in the number of sessions.

My pull request https://github.com/cult-of-coders/redis-oplog/pull/136 is focused on performance. I also had to modify publish-composite a bit (you can see everything in the README), and I’m going to write a small app that tests the differences.

Regarding “subscription ready”: most likely you have a publish composite. When Meteor sends out the “ready” event, it doesn’t mean all data has been pushed to the client. There is no way in Meteor to know if all data has been received on the client. If you did not have this error before, it was just a matter of chance, or the “ready” event was being sent much slower.
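One way to avoid the TypeError is to not assume the user document is already there. A minimal sketch, assuming the chat has `user1`/`user2` fields as in your snippet; `resolveForeignUserId` and the lookup function are made-up names, and the `Map` only stands in for the client-side users collection:

```javascript
// Sketch: pick the other participant's id without assuming that the user
// document has already arrived on the client.
function resolveForeignUserId(chat, currentUserId, findUserById) {
  if (!chat) return undefined;

  const otherId = chat.user2 === currentUserId ? chat.user1 : chat.user2;
  const otherUser = findUserById(otherId);

  // The document may not be on the client yet, even after "ready".
  return otherUser ? otherUser._id : undefined;
}

// Stand-in for the users collection: only u1 has arrived so far.
const arrivedUsers = new Map([['u1', { _id: 'u1' }]]);
const findUserById = (id) => arrivedUsers.get(id);
const chat = { user1: 'u1', user2: 'u2' };

const seenByU2 = resolveForeignUserId(chat, 'u2', findUserById); // other user present
const seenByU1 = resolveForeignUserId(chat, 'u1', findUserById); // other user missing
const noChatYet = resolveForeignUserId(undefined, 'u1', findUserById);
```

In the app the lookup would be something like `(id) => Meteor.users.findOne(id)`, ideally run inside a reactive computation so it re-runs once the document shows up.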

Oh okay, is this only the case with publishComposite? According to the Meteor docs, this should not be the case for the normal publish method (subscription.ready()):

Call inside the publish function. Informs the subscriber that an initial, complete snapshot of the record set has been sent.

Update: Redis-Oplog 1.2.0 has been released

It contains a lot of improvements and stability fixes.

This is prod-ready. But always QA it like crazy before deploying live, so you won’t blame me if something bad happens.

However, it does not yet have the level of perfection and elegance I want it to have, so a lot of work still needs to be done. I have solid confirmation that this is indeed the right direction and that it enables scalability of reactivity.

This morning I discussed some things with @mitar regarding his awesome package reactive-publish and Meteor’s internals. I realized that with redis-oplog I kind of reinvented some wheels already made by Meteor and did not hook into the proper places, which led me to write custom code to support other packages and to do additional computation.

By hooking into those places, we should expect an even bigger performance increase.

However, I kept my promise and I created a small naive benchmarking tool. This is not a real-life scenario testing tool and it does not use any of the fine-tuning provided by RedisOplog, but it offers us some insight.

I only tested it locally, and the benchmark results are consistent:

- MongoDB oplog (on the local machine) is around 10%-20% faster in response times.

However, in a prod environment with a remote db, I expect better results. Anyone who has the curiosity and the time can check it out, and I will be very happy to assist. Currently I want to switch my focus to what is truly important.

And this makes a lot of sense: not having deep knowledge of how everything works behind the scenes, I had to reinvent some work done by MDG, in a less optimal way.

Why it’s a bit slower:

- The additional overhead of sending correct data to redis.

- Having an additional store for data and performing changes on it.

- Some checks are performed twice, such as which changes to send out.

I realized that a smaller throttle (faster subsequent writes to the db) brings RedisOplog closer to MongoDB Oplog, which is again expected.

All of these things will be improved, I promise you that. I’m starting to shift my focus to performance, so the changes I’m making should have a great impact on speed.

Again, to stress this: the benchmark does not test the fine-tuned reactivity, which is the crown jewel of this package. The DB was on a local server, and I had fewer than 50 active connections.

Cheers.

I’m currently having lots of scaling problems in my production app. Will definitely start testing redis-oplog in the near-future. Hoping MDG works with you on continuing this. Thank you so much for your hard work.

Thanks @evolross, I hope your problems will be fixed. Take the time to read how it works and how to fine-tune it; you should find ways that apply to your problems. I have already talked with 3 people who moved this into prod, and they experienced lots of improvements.

There is still work to do on this, but we’re getting there.

Ping! What is your experience with redis-oplog so far ?

For us, it dramatically improved performance. Because we were using external databases and tailing the oplog was very costly, having Redis on an internal network sped things up a lot.

We did not notice any major bugs and it works nicely.